Named Entity Recognition In Malayalam

What is named entity recognition?

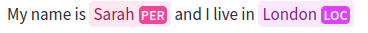

Let’s start with the most obvious question: what exactly is Named Entity Recognition? Named Entity Recognition (NER) is a natural language processing (NLP) technique that identifies and categorizes named entities in a given text. Named entities are words or phrases that refer to real-world objects such as people, organizations, locations, dates, and quantities. NER systems aim to extract and classify these entities automatically, as a step towards understanding the structure and meaning of a text. The illustration here shows NER in English, taken from a Hugging Face Space that uses a bert-base model.

When we approach this task in Malayalam, we face challenges that arise from the inherent nature of the language. In this blog post, I’ll describe the deep learning approaches I experimented with and offer my explanation of why they work.

All the code and models that resulted from this work are available at

Why is NER in Malayalam Challenging?

The first and most fundamental problem is the availability of data. Malayalam has a limited digital footprint compared to English, so scraping the web does not yield data in sufficient quantities. Recently, however, the AI4Bharat initiative by IIT Madras released a large dataset named Naamapadam, which is currently the largest publicly available NER dataset for a large collection of Indic languages. I expand on the dataset further down the post.

The second problem is morphology. Malayalam, like other Dravidian languages, exhibits intricate word forms and affixation patterns, which makes it hard to identify and classify named entities accurately. One aspect of this complexity is sandhi, the fusion or alteration of sounds at word boundaries when words are combined. Consider the words “ആനയും” (aanayum) and “ഉണ്ടെന്ന്” (undennu), which roughly mean “the elephant too” and “that there is” respectively. When they are combined, a sandhi rule changes the word boundary and the phonetic form, giving “ആനയുമുണ്ടെന്ന്” (aanayumundennu), roughly “that there is an elephant too.” To recognize named entities that have undergone sandhi, the NER system has to handle these changes, segmenting and recognizing the individual words within the combined form.

Another challenge arises from the extensive use of inflectional suffixes and prefixes in Malayalam. Words in Malayalam can undergo modifications to indicate tense, gender, number, case, and other grammatical features. For example, consider the word “കാരന്” (kaaran), which means “person” in Malayalam. The word can undergo various changes depending on the grammatical context. In the plural form, it becomes “കാരന്മാര്” (kaaranmaar), and in the genitive case, it becomes “കാരന്റെ” (kaarante). Recognizing the different inflected forms of a named entity requires the NER system to handle these variations and understand the underlying morphological rules.

Additionally, the use of placenames, honorifics and titles in Malayalam further adds to the morphological complexity. Respectful and honorific terms are commonly used in addressing individuals, especially in formal contexts. These honorifics may modify or precede the names of individuals, requiring the NER system to understand their variations and incorporate them into the recognition process.

The Dataset

As mentioned earlier, I used Naamapadam by AI4Bharat. It contains 717k sentences in the train split, 3.62k in the validation split, and 974 sentences in the test split. Its tags are person (PER), location (LOC), organization (ORG), and Other (O). The way the authors generated this large dataset is clever. They took Samanantar, a parallel corpus of English and Indic languages, and tagged the English side with a high-quality off-the-shelf NER model. They then used learned word alignment to map the English words to their Indic counterparts and projected the tags across, ending up with a decent-sized tagged corpus for NER.
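To make the projection idea concrete, here is a minimal sketch of how tags could be carried across a word alignment. It is not the Naamapadam authors' pipeline: the function `project_tags`, the transliterated toy sentence, and the alignment pairs are all assumptions for illustration.

```python
# Hypothetical toy example: project English NER tags onto the target side
# of a parallel sentence through word alignments. All data is made up.

def project_tags(src_tags, alignment, tgt_len):
    """Copy entity types to aligned target tokens, then rebuild B-/I- prefixes."""
    # Entity type (e.g. "PER") for each target position, or None for O.
    tgt_types = [None] * tgt_len
    for src_idx, tgt_idx in alignment:
        tag = src_tags[src_idx]
        if tag != "O":
            tgt_types[tgt_idx] = tag.split("-", 1)[1]

    tgt_tags, prev = [], None
    for ent in tgt_types:
        if ent is None:
            tgt_tags.append("O")
        elif ent == prev:
            tgt_tags.append("I-" + ent)   # continues the previous entity
        else:
            tgt_tags.append("B-" + ent)   # starts a new entity
        prev = ent
    return tgt_tags

# English: "Sachin Tendulkar visited Kochi"
src_tags = ["B-PER", "I-PER", "O", "B-LOC"]
# Target word order (transliterated): "Sachin Tendulkar Kochi sandarshichu"
# (source index, target index) pairs, as a word aligner might produce them.
alignment = [(0, 0), (1, 1), (2, 3), (3, 2)]

projected = project_tags(src_tags, alignment, tgt_len=4)
print(projected)
```

One limitation of this simple version: two different entities of the same type that end up adjacent on the target side are merged into one span.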

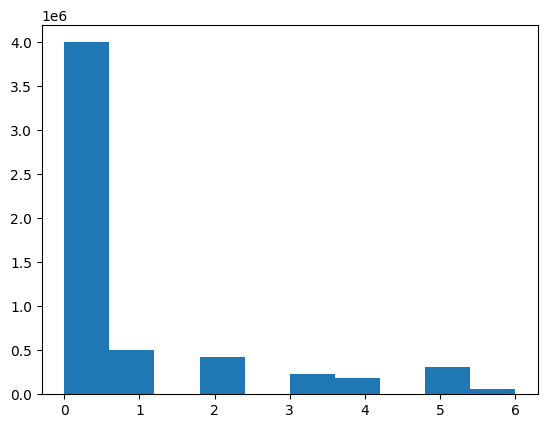

Before modeling the dataset, I was curious about the distribution of the tags, so I visualized it and found severe imbalances, which are shown below.

Apologies for the numerical class labels; here is the mapping: 0 = Other, 1 = B-PER, 2 = I-PER, 3 = B-ORG, 4 = I-ORG, 5 = B-LOC, 6 = I-LOC, where B = Beginning and I = Inside.

The O (Other) class heavily outnumbers the rest of the classes, so we need a way to handle that. I explain my approach further down the post.
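A tag distribution like this can be computed in a few lines. The sketch below uses the id-to-tag mapping given above; the sentences in `toy_sentences` are made up and only stand in for the dataset's per-sentence label lists.

```python
from collections import Counter

ID2TAG = {0: "O", 1: "B-PER", 2: "I-PER", 3: "B-ORG",
          4: "I-ORG", 5: "B-LOC", 6: "I-LOC"}

def tag_distribution(tag_sequences):
    """Return the share of each tag over all tokens in all sentences."""
    counts = Counter(tag for seq in tag_sequences for tag in seq)
    total = sum(counts.values())
    return {ID2TAG[i]: counts.get(i, 0) / total for i in ID2TAG}

# Made-up tag id sequences, one list per sentence.
toy_sentences = [
    [1, 2, 0, 0, 5, 0],
    [0, 0, 0, 3, 4, 0, 0],
    [0, 0, 0, 0, 0, 0, 0],
]

dist = tag_distribution(toy_sentences)
for tag, share in dist.items():
    print(f"{tag:6s} {share:.2f}")
```

Even in this tiny example the O tag covers three quarters of the tokens, which is the kind of skew the loss function has to cope with.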

Preprocessing

A few standard NLP preprocessing steps were applied to the sentences before modeling. All punctuation was removed. The tags were then realigned to account for the splits that occur when a word is tokenized into several subword pieces. The code for all the preprocessing steps is available in my GitHub repository.
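The two steps can be sketched as follows. This is an illustration under assumptions, not the code from the repository: the helper names are hypothetical, `toy_tokenize` is a stand-in for a real subword tokenizer, and giving continuation pieces an I- tag is one common convention that may differ from what the repository does.

```python
import string

PUNCT_TABLE = str.maketrans("", "", string.punctuation)

def strip_punctuation(words, tags):
    """Remove ASCII punctuation; drop words that become empty, with their tags."""
    kept_words, kept_tags = [], []
    for word, tag in zip(words, tags):
        cleaned = word.translate(PUNCT_TABLE)
        if cleaned:
            kept_words.append(cleaned)
            kept_tags.append(tag)
    return kept_words, kept_tags

def realign_tags(words, tags, tokenize):
    """Expand word-level tags so that every subword piece has a tag."""
    pieces, piece_tags = [], []
    for word, tag in zip(words, tags):
        for i, piece in enumerate(tokenize(word)):
            pieces.append(piece)
            if i == 0 or tag == "O":
                piece_tags.append(tag)
            else:
                # Later pieces of an entity word continue the entity.
                piece_tags.append("I-" + tag.split("-", 1)[1])
    return pieces, piece_tags

def toy_tokenize(word, size=3):
    """Stand-in for a subword tokenizer: fixed-size character chunks."""
    return [word[i:i + size] for i in range(0, len(word), size)]

words = ["Kochi,", "is", "in", "Kerala", "."]
tags = ["B-LOC", "O", "O", "B-LOC", "O"]

words, tags = strip_punctuation(words, tags)
pieces, piece_tags = realign_tags(words, tags, toy_tokenize)
print(list(zip(pieces, piece_tags)))
```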

Tokenization

I chose byte pair encoding (BPE) here. It has proven powerful in NLP and was used, among others, in the original GPT paper. BPE splits the text into individual characters and then iteratively merges the most frequent adjacent pair, so the most frequently occurring sequences end up with their own tokens. This gives a useful middle ground between character-level and word-level tokenization. The method does not account for sandhi, though, and that is one area of research that might benefit all Malayalam NLP tasks. I used pre-trained tokenization and subword embeddings from BPEmb.
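The merge loop is easy to see on a toy corpus. The sketch below is a from-scratch illustration, not the BPEmb implementation; `learn_bpe` and the English toy words are assumptions chosen to keep the example readable.

```python
from collections import Counter

def learn_bpe(words, num_merges):
    """Learn BPE merges: repeatedly fuse the most frequent adjacent symbol pair."""
    # Each word starts as a tuple of single characters.
    vocab = Counter(tuple(w) for w in words)
    merges = []
    for _ in range(num_merges):
        pair_counts = Counter()
        for symbols, freq in vocab.items():
            for pair in zip(symbols, symbols[1:]):
                pair_counts[pair] += freq
        if not pair_counts:
            break  # every word is already a single symbol
        best = max(pair_counts, key=pair_counts.get)
        merges.append(best)

        # Rewrite every word with the chosen pair fused into one symbol.
        new_vocab = Counter()
        for symbols, freq in vocab.items():
            merged, i = [], 0
            while i < len(symbols):
                if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == best:
                    merged.append(symbols[i] + symbols[i + 1])
                    i += 2
                else:
                    merged.append(symbols[i])
                    i += 1
            new_vocab[tuple(merged)] += freq
        vocab = new_vocab
    return merges, vocab

merges, vocab = learn_bpe(["low", "low", "lower", "lowest"], num_merges=3)
print(merges)
print(dict(vocab))
```

After three merges the frequent stem has become a single token while the rarer endings are still split into characters, which is the middle ground described above.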

Model Architectures

I evaluated three main architectures: RNN, LSTM, and Transformer. Of the three, the LSTM and Transformer gave the best results. Both the BiLSTM and the Transformer models are available on my GitHub page.

RNN

Since NER is a sequence modeling task, my first choice was the RNN. I also wanted a baseline sense of how RNNs performed before moving on to other architectures. I used a 3-layer RNN with ReLU activation and a dropout of 0.3. During the first epoch of training, the loss went down as expected. But around halfway into the epoch, the loss spiked suddenly. I suspected the learning rate was too high and experimented with various values, but the issue persisted. The next place I looked was the gradients of the layers. That is when I realized the gradient values were far too large: I had run into “exploding gradients”. I then explored ways to eliminate the numerical instabilities in the model.
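A scalar toy model shows why this can happen in a ReLU RNN. The sketch below is an assumption-laden simplification, not the 3-layer model from this post: it takes a one-unit recurrence h_t = relu(w * h_{t-1}) with positive activations, where backpropagation through each time step multiplies the gradient by w.

```python
def backprop_factor(w, steps):
    """Gradient of h_T with respect to h_0 for h_t = relu(w * h_{t-1}), h > 0."""
    grad = 1.0
    for _ in range(steps):
        # relu'(x) = 1 for x > 0, so each time step contributes a factor w.
        grad *= w
    return grad

for w in (0.9, 1.0, 1.1):
    print(f"w = {w}: gradient factor after 100 steps = {backprop_factor(w, 100):.3e}")
```

A recurrent weight only slightly above 1 is enough to blow the gradient up over a long sequence, and one slightly below 1 makes it vanish. Unlike tanh, ReLU does not squash the activations, so nothing caps this growth.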

First, I modified the weight initialization strategy. The weights were initialized randomly at first; I then moved to Kaiming Normal initialization. In Kaiming Normal initialization, the weights of each layer are sampled from a normal distribution with zero mean and a variance calculated from the fan-in of the layer. The fan-in is the number of input connections to a neuron or a layer. The variance is chosen so that the signals neither vanish nor explode as they propagate through the network. This delayed the exploding gradients but did not solve the problem fully.
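For reference, here is a small standard-library sketch of the sampling rule. It assumes the ReLU variant, where the variance is 2 / fan_in; the function name `kaiming_normal` and the layer sizes are made up for illustration. In PyTorch the corresponding initializer is `torch.nn.init.kaiming_normal_`.

```python
import math
import random

def kaiming_normal(fan_out, fan_in, seed=0):
    """Sample a fan_out x fan_in weight matrix from N(0, 2 / fan_in)."""
    rng = random.Random(seed)
    std = math.sqrt(2.0 / fan_in)  # assumption: ReLU gain of sqrt(2)
    return [[rng.gauss(0.0, std) for _ in range(fan_in)] for _ in range(fan_out)]

weights = kaiming_normal(fan_out=64, fan_in=256)

# Check the empirical statistics against the target.
flat = [w for row in weights for w in row]
mean = sum(flat) / len(flat)
var = sum((w - mean) ** 2 for w in flat) / len(flat)
print(f"target variance {2 / 256:.5f}, sample variance {var:.5f}")
```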

Another thing missing from my initial RNN model was a normalization layer, so I added a layer norm layer from PyTorch. The reasoning was that normalization layers rescale the activations and keep them in a desired range so that they neither explode nor vanish. But in my case, it did not solve the problem.
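What layer normalization does to a single activation vector can be sketched in plain Python. This version leaves out the learnable scale and shift that `torch.nn.LayerNorm` applies by default, and the input values are made up.

```python
import math

def layer_norm(x, eps=1e-5):
    """Normalize one activation vector to zero mean and (almost) unit variance."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]

activations = [1000.0, 2000.0, 3000.0, 6000.0]  # made-up, very large values
normalized = layer_norm(activations)
print([round(v, 3) for v in normalized])
```

However large the inputs are, the outputs end up on the same scale, which is the range-keeping effect described above.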

BiLSTM

That is when I moved on to an LSTM with the same configuration as the RNN. As expected, this immediately solved all of the numerical instabilities, and the model trained successfully. It also prompted me to experiment with a BiLSTM. During my literature survey before starting this project, I had observed that BiLSTMs were quite popular for NER in many other languages. I trained a BiLSTM on the same data and obtained a model with an F1-score of 0.96 and a loss of 0.09 on the test set, with only 2.49M trainable parameters.

Transformer

Transformers have been in the limelight ever since they debuted in 2017. They became even more popular when large pretrained language models like BERT and GPT came along, which use the encoder and decoder parts of the transformer respectively. The transformer architecture, especially its self-attention layer, gives every position a global context over the incoming vectors.
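The "global context" comes from every token attending to every other token. Below is a minimal single-head sketch of scaled dot-product self-attention in plain Python. It is a simplification under assumptions: the learned query, key, and value projections are left out, so the input vectors play all three roles, and the toy vectors are made up.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(x):
    """x is a list of token vectors, used as queries, keys, and values."""
    d = len(x[0])
    outputs, weights = [], []
    for q in x:
        # Similarity of this token to every token in the sequence.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in x]
        attn = softmax(scores)
        weights.append(attn)
        # Output is the attention-weighted average of all token vectors.
        outputs.append([sum(a * v[j] for a, v in zip(attn, x)) for j in range(d)])
    return outputs, weights

tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens)
for row in weights:
    print([round(a, 3) for a in row])
```

Each row of `weights` sums to 1 and has a non-zero entry for every position, so each output vector mixes information from the whole sequence in a single step.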

The architecture I chose was a two-layer encoder-only transformer, trained from scratch. The model did not exhibit any numerical instabilities and trained successfully. It obtained an F1-score of 0.98 and a loss of 0.05 on the test set, with 4.25M trainable parameters. On some text the transformer performs only slightly better than the BiLSTM, but overall it is still the preferred model when high accuracy matters.

How I addressed the tag imbalance

Imbalanced datasets are nothing new in machine learning; real-world datasets are often heavily imbalanced. The topic has been researched extensively, and several well-defined approaches exist for the class imbalance problem. Oversampling increases the number of data points in the underrepresented class. Undersampling reduces the number of data points in the overrepresented class.

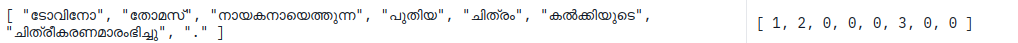

However, these methods cannot be applied here directly. Let’s take a look at a sentence from the dataset.

Undersampling or oversampling at the tag level would mean removing or duplicating individual words in a sentence. That makes no sense here, because the sentence would be deformed: we would essentially be removing our features, which is never good for modelling. This constraint turned my focus to weighted loss functions.

The loss function I used is the familiar cross-entropy loss with one modification: I manually assign a weight to each class. Overrepresented classes are scaled down and underrepresented ones are scaled up, so that training focuses less on the overrepresented classes and more on the underrepresented ones.
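Here is a plain-Python sketch of a class-weighted cross-entropy, to show what the weights do. The logits, targets, and weight values are made up (they are not the weights used for the models in this post). The loss is averaged over the sum of the applied weights, which is how `torch.nn.CrossEntropyLoss` behaves when given a `weight` tensor with the default mean reduction.

```python
import math

def weighted_cross_entropy(logits, targets, class_weights):
    """Weighted mean of per-token negative log-likelihoods."""
    total_loss, total_weight = 0.0, 0.0
    for row, target in zip(logits, targets):
        # Stable log-sum-exp, then the negative log-probability of the target.
        m = max(row)
        log_sum_exp = m + math.log(sum(math.exp(z - m) for z in row))
        nll = log_sum_exp - row[target]
        w = class_weights[target]
        total_loss += w * nll
        total_weight += w
    return total_loss / total_weight

# Three tokens, three classes (0 = O, 1 and 2 = entity tags); made-up numbers.
logits = [[4.0, 0.0, 0.0], [4.0, 0.0, 0.0], [2.0, 1.0, 0.0]]
targets = [0, 0, 1]  # the third token is an entity the model gets wrong

uniform = weighted_cross_entropy(logits, targets, [1.0, 1.0, 1.0])
weighted = weighted_cross_entropy(logits, targets, [0.1, 1.0, 1.0])
print(f"uniform weights: {uniform:.3f}, down-weighted O class: {weighted:.3f}")
```

With the O class scaled down, the mistake on the entity token dominates the loss, so the gradient pushes harder on exactly the tags that are rare.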

Concluding Thoughts and Future Work

The transformer model shown here achieves the best result so far based on F1-score (open to discussion and further tests). However, some things still need to be addressed. First, even though I mentioned sandhi, I have not yet made any active effort to handle it. One option that comes to mind is to split these words in the corpus with a sandhi splitter before tokenization; this method still needs to be evaluated.

Text augmentation was one avenue I wanted to explore, but in practice it became tedious because some augmented sentences were grammatically incorrect. If that is solved, augmentation might help the models generalize better.

I assigned the weights of the weighted loss function manually, but a more systematic way of assigning them might yield better performance. One idea I had was to make the weights learnable, but whether that is a sane idea is open to speculation.
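One common systematic option, which I have not evaluated here, is to weight each class by its inverse frequency. The sketch below uses the "balanced" rule n_samples / (n_classes * class_count); the function name and the tag counts are made up for illustration.

```python
from collections import Counter

def inverse_frequency_weights(tag_ids, num_classes):
    """Weight for class c = n_samples / (n_classes * count of c)."""
    counts = Counter(tag_ids)
    total = len(tag_ids)
    weights = []
    for c in range(num_classes):
        count = counts.get(c, 0)
        # Assumption: a class that never occurs gets weight 0.
        weights.append(total / (num_classes * count) if count else 0.0)
    return weights

# Made-up flat list of tag ids: 90% class 0, the rest split over two classes.
tag_ids = [0] * 90 + [1] * 6 + [2] * 4
class_weights = inverse_frequency_weights(tag_ids, num_classes=3)
print([round(w, 3) for w in class_weights])
```

The rarer a class is, the larger its weight. The resulting values could then be passed to the weighted loss instead of hand-picked ones.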

I encountered a paper that shows that training a multilingual NER model can improve the performance of the model even further. So training a multilingual NER model using the same architecture on all the languages provided by the dataset is a very plausible idea that I am working on currently.

Once these flaws are addressed we will have a very performant model for NER tasks in Malayalam.